In this blog, we will go through the differences between ASICs and FPGAs.

Difference between ASIC and FPGA

Fundamentally, ASICs and FPGAs are both types of chips. ASICs are fully customized chips whose functionality is fixed at manufacture and cannot be altered. FPGAs, on the other hand, are semi-custom chips whose functionality can be reprogrammed, which makes them highly versatile.

We can illustrate the difference between the two with an analogy. Making an ASIC is like making toys with molds. The mold has to be made first, which is labor-intensive, and once it is done, it cannot be modified; to make a new toy, you have to create a new mold. Using an FPGA, by contrast, is like building toys with LEGO bricks. You can start building right away and finish in a short time, and if you're not satisfied or want to build a different toy, you can take it apart and start again.

Difference between ASIC and FPGA: Design

Many of the design tools for ASICs and FPGAs are similar. The FPGA design flow, however, is less complicated: it skips several manufacturing steps and additional verification steps, amounting to only about 50%-70% of the ASIC flow. Above all, FPGAs do not require the most daunting step of all, wafer fabrication. As a result, developing an ASIC may take several months or even more than a year, whereas an FPGA design typically takes only a few weeks to a few months.

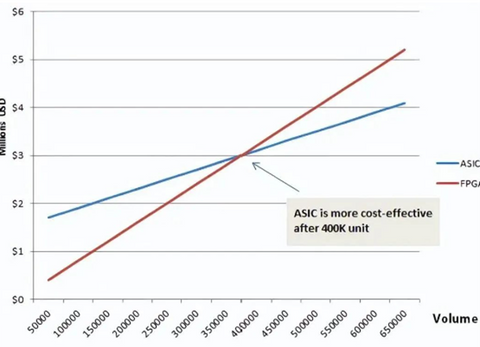

Difference between ASIC and FPGA: Cost

Earlier, we mentioned that FPGAs do not require wafer fabrication. Does this mean that FPGAs are always cheaper than ASICs? Not necessarily. FPGAs are pre-fabricated and can be programmed in a laboratory or in the field, so they avoid the large Non-Recurring Engineering (NRE) costs of ASIC manufacturing. However, as a "general-purpose toy," each FPGA costs roughly ten times as much per unit as an equivalent ASIC (the molded toy). If the production volume is low, FPGAs are cheaper. If the production volume is high, the one-time engineering costs of the ASIC are amortized over many units, and ASICs become cheaper. It's just like the cost of molds: molds are expensive, but if sales volume is high, the investment pays off.
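The break-even reasoning above can be sketched in a few lines of Python. All dollar figures here are hypothetical (a $2M ASIC NRE, $5 per ASIC, and $50 per FPGA to reflect the roughly ten-fold unit-price gap), and FPGA NRE is taken as zero as a simplification; real numbers depend heavily on process node, die size, and volume pricing.

```python
# Hypothetical figures for illustration only; real NRE and unit costs
# vary widely with process node, die size, and vendor pricing.
ASIC_NRE_USD = 2_000_000   # one-time masks, tooling, verification (assumed)
ASIC_UNIT_USD = 5.0        # per-chip cost once in production (assumed)
FPGA_UNIT_USD = 50.0       # per-chip cost, ~10x the ASIC unit cost (assumed)


def total_cost(volume: int, nre: float, unit: float) -> float:
    """Total cost of producing `volume` chips: one-time NRE plus per-unit cost."""
    return nre + unit * volume


def break_even_volume(asic_nre: float, asic_unit: float, fpga_unit: float) -> float:
    """Volume at which ASIC and FPGA total costs are equal.

    FPGA NRE is treated as zero, matching the simplification in the text.
    Solves asic_nre + asic_unit * v == fpga_unit * v for v.
    """
    if fpga_unit <= asic_unit:
        raise ValueError("FPGA unit cost must exceed ASIC unit cost for a break-even point")
    return asic_nre / (fpga_unit - asic_unit)


if __name__ == "__main__":
    v_star = break_even_volume(ASIC_NRE_USD, ASIC_UNIT_USD, FPGA_UNIT_USD)
    print(f"Break-even volume: {v_star:,.0f} units")
    for volume in (1_000, 10_000, 100_000, 1_000_000):
        asic = total_cost(volume, ASIC_NRE_USD, ASIC_UNIT_USD)
        fpga = total_cost(volume, 0.0, FPGA_UNIT_USD)
        cheaper = "FPGA" if fpga < asic else "ASIC"
        print(f"{volume:>9,} units: ASIC ${asic:>13,.0f}  FPGA ${fpga:>13,.0f}  -> {cheaper}")
```

With these assumed numbers, the crossover lands at about 44,000 units: below that the FPGA is cheaper, above it the ASIC wins, which is the same shape as the mold analogy.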

Total costs of ASIC vs. FPGA, including NRE (in millions of USD)

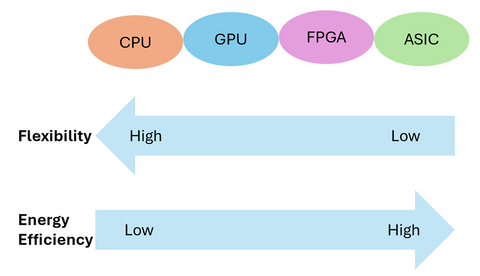

Difference between ASIC and FPGA: Performance and Power Consumption

In terms of performance and power consumption, ASICs, as specialized custom chips, are indeed stronger than FPGAs. FPGAs are general-purpose reconfigurable chips with a lot of redundancy: no matter how you design them, some resources will always go unused. ASICs, like the molded toys described earlier, are tailored closely to their task with little waste and use hard-wired logic. Therefore, they offer higher performance and lower power consumption. Still, FPGAs and ASICs are not simply competitors or substitutes for each other; they serve different purposes.

Difference between ASIC and FPGA: Applications

FPGAs are commonly used for product prototyping, design iteration, and low-volume, specialized applications, and they suit products with short development cycles. FPGAs are also frequently used to validate ASIC designs. ASICs, in contrast, are used for large-scale, high-complexity chips and for mature products with high production volumes. FPGAs are also well suited to beginners who want to learn digital design or take part in competitions, and many universities use them in electronics-related courses. Commercially, the main application areas of FPGAs include communications, defense, aerospace, data centers, healthcare, automotive, and consumer electronics.

FPGAs have been used in the communications sector for a long time. Many base stations use FPGA-based chips for baseband processing, beamforming, and antenna transceivers, and FPGAs are also used for encoding and protocol acceleration in core networks. In data centers, FPGAs were previously used in components such as DPUs. However, as many of these technologies matured and became standardized, communication equipment manufacturers began replacing FPGAs with ASICs to reduce costs.

It's worth mentioning that Open RAN, which has become popular in recent years, often uses general-purpose processors (such as Intel CPUs) for computation. This approach consumes far more energy than FPGA and ASIC solutions, which is one of the main reasons why companies like Huawei are hesitant to adopt Open RAN.

In the automotive and industrial sectors, FPGAs are valued mainly for their low latency, so they are used in ADAS (Advanced Driver Assistance Systems) and servo motor drives. In consumer electronics, FPGAs are used because products iterate rapidly: the development cycle of ASICs is too long, and by the time an ASIC is ready, the market opportunity may have passed.

AI applications of ASIC and FPGA

From a purely theoretical and architectural perspective, ASICs and FPGAs can outperform CPUs and GPUs. CPUs and GPUs follow the von Neumann architecture: every instruction must be fetched from memory, decoded, and executed, and accesses to shared memory go through arbitration and caching.

FPGAs and ASICs, however, do not follow the von Neumann architecture. Taking the FPGA as an example, it is essentially an instruction-less architecture without shared memory. The function of each FPGA logic unit is fixed when the device is programmed, so a software algorithm is effectively implemented directly in hardware. Where state needs to be stored, the registers and on-chip memory (BRAM) in an FPGA belong to their own control logic, so no arbitration or caching is required.
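As a loose software analogy (not a model of real hardware), the Python sketch below computes y = a*x + b two ways. The first runs a tiny, hypothetical three-instruction program through a fetch-decode-execute loop, the way a von Neumann processor does; the second is a fixed function whose structure is the computation itself, similar in spirit to logic configured into an FPGA. The instruction set, opcodes, and register names are made up for illustration.

```python
from typing import Dict, List, Tuple

# Toy analogy only: opcodes and register names are hypothetical.
Instruction = Tuple[str, str, str, str]  # (opcode, dest, src1, src2)

# A hypothetical 3-instruction program stored in "instruction memory".
PROGRAM: List[Instruction] = [
    ("MUL", "t0", "a", "x"),
    ("ADD", "y", "t0", "b"),
    ("HALT", "", "", ""),
]


def run_processor(program: List[Instruction], regs: Dict[str, int]) -> Tuple[int, int]:
    """Von Neumann-style fetch-decode-execute loop.

    Returns (value of register y, number of instructions fetched).
    """
    pc = 0
    fetched = 0
    while True:
        opcode, dest, src1, src2 = program[pc]  # fetch from memory
        fetched += 1
        pc += 1
        if opcode == "HALT":                    # decode ...
            break
        elif opcode == "MUL":                   # ... and execute
            regs[dest] = regs[src1] * regs[src2]
        elif opcode == "ADD":
            regs[dest] = regs[src1] + regs[src2]
        else:
            raise ValueError(f"unknown opcode {opcode}")
    return regs["y"], fetched


def hardwired_axpb(a: int, x: int, b: int) -> int:
    """Dataflow-style version: a multiplier wired straight into an adder, no instructions."""
    return a * x + b


if __name__ == "__main__":
    y_cpu, n_fetched = run_processor(PROGRAM, {"a": 3, "x": 4, "b": 5})
    print("processor:", y_cpu, "after", n_fetched, "instruction fetches")
    print("hardwired:", hardwired_axpb(3, 4, 5))
```

Both paths give the same answer, but the processor version fetches and decodes three instructions to get there, while the hardwired version does no fetching or decoding at all. In real hardware, that overhead shows up as control logic, instruction-memory traffic, and extra cycles.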

In terms of the share of the chip devoted to arithmetic units (ALUs), GPUs surpass CPUs, and FPGAs surpass GPUs: because an FPGA has almost no instruction-control logic, nearly all of its logic resources can be used for computation. Taking all of these factors into account, FPGAs can compute faster than GPUs for suitable workloads.
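One common first-order way to see why the share of arithmetic units matters is the peak-throughput estimate below. It is a generic approximation, not a formula from this article, and it ignores memory bandwidth and real-world utilization:

$$
\text{Peak throughput}\ [\text{ops/s}] \approx N_{\text{units}} \times k_{\text{ops/cycle}} \times f_{\text{clk}}
$$

Because an FPGA typically runs at a much lower clock than a GPU (for example, 400 MHz versus 2 GHz, a 5x gap), it can only match or exceed the GPU's peak if it devotes proportionally more hardware to the computation, i.e., a product $N_{\text{units}} \times k_{\text{ops/cycle}}$ at least 5x larger.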

Now let's look at power consumption. GPU power consumption is notoriously high: a single chip can draw 250 W or even 450 W (RTX 4090). In contrast, FPGAs generally consume only 30-50 W. This difference comes primarily from memory access. GPU memory interfaces (GDDR5, HBM, HBM2) have extremely high bandwidth, roughly 4-5 times that of the traditional DDR interfaces used with FPGAs. However, at the chip level, accessing DRAM consumes over 100 times more energy than accessing SRAM, so the GPU's frequent DRAM accesses result in high power consumption. In addition, FPGAs run at lower clock frequencies (below 500 MHz) than CPUs and GPUs (1-3 GHz), which further lowers their power consumption. The lower clock frequency of FPGAs is mainly due to constraints on routing resources: some signals must travel long distances across the chip, and raising the clock frequency would cause timing violations.
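The two effects described above can be put into numbers with a back-of-the-envelope Python sketch. Only the roughly 100x DRAM-to-SRAM energy ratio comes from the text; the absolute per-access energy (5 pJ for SRAM), the workload size, and the circuit parameters (activity 0.1, 1 nF switched capacitance, 0.9 V) are assumed placeholders. The second function uses the standard CMOS dynamic-power approximation P = αCV²f, which ignores static leakage and assumes the same supply voltage at both frequencies.

```python
# Back-of-the-envelope sketch. Absolute energies and circuit parameters are
# assumed placeholders; only the ~100x DRAM/SRAM ratio comes from the text.
SRAM_PJ_PER_ACCESS = 5.0                        # assumed on-chip SRAM/BRAM access energy
DRAM_PJ_PER_ACCESS = 100 * SRAM_PJ_PER_ACCESS   # "over 100 times" SRAM, per the text


def memory_energy_joules(accesses: int, dram_fraction: float) -> float:
    """Energy for `accesses` memory accesses, a `dram_fraction` of which go off-chip."""
    if not 0.0 <= dram_fraction <= 1.0:
        raise ValueError("dram_fraction must be between 0 and 1")
    dram_pj = accesses * dram_fraction * DRAM_PJ_PER_ACCESS
    sram_pj = accesses * (1.0 - dram_fraction) * SRAM_PJ_PER_ACCESS
    return (dram_pj + sram_pj) * 1e-12  # picojoules -> joules


def dynamic_power_watts(activity: float, capacitance_f: float,
                        voltage_v: float, freq_hz: float) -> float:
    """CMOS switching power P = alpha * C * V^2 * f (linear in clock frequency)."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


if __name__ == "__main__":
    n = 10**9  # one billion memory accesses (assumed workload)
    for frac in (0.9, 0.1):
        print(f"{frac:.0%} of accesses to DRAM: {memory_energy_joules(n, frac):.3f} J")

    # Same assumed circuit clocked at GPU-like vs FPGA-like frequencies.
    p_fast = dynamic_power_watts(0.1, 1e-9, 0.9, 2e9)
    p_slow = dynamic_power_watts(0.1, 1e-9, 0.9, 400e6)
    print(f"2 GHz vs 400 MHz switching power ratio: {p_fast / p_slow:.1f}x")
```

Under these assumptions, moving most accesses from DRAM to on-chip memory cuts memory energy by roughly 8x, and lowering the clock from 2 GHz to 400 MHz cuts switching power by 5x. Real chips also differ in voltage, process, and leakage, so treat these as illustrations of the trends rather than predictions.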

Lastly, let's consider latency. GPU latency is higher than FPGA latency. To maximize parallelism, GPUs typically group samples into fixed-size "batches," so several inputs must be collected before they are processed together. The FPGA architecture, by contrast, is batch-less: each data packet can be output as soon as it is processed, which gives FPGAs a latency advantage.

So the question arises: if GPUs lag behind FPGAs and ASICs in these respects, why are they still the favorite for AI computing? The answer is simple: in the current race for maximum computational performance and scale, the industry cares relatively little about cost and power consumption. Thanks to NVIDIA's long-term efforts, GPU core counts and clock frequencies have kept increasing and chip sizes have kept growing, delivering enormous computational power. Power consumption is handled through advanced fabrication processes and cooling solutions such as liquid cooling, with the main goal of preventing overheating.

Besides hardware, NVIDIA's strategic focus on software and its ecosystem is another key factor. CUDA, developed by NVIDIA, is a core competitive advantage of its GPUs: with CUDA, beginners can get started with GPU development quickly. NVIDIA has cultivated this ecosystem over many years and built a solid user base. By comparison, developing for FPGAs and ASICs is still too complex for wide adoption.

In terms of interfaces, GPUs offer a fairly limited choice (mainly PCIe) and lack the flexibility of FPGAs, whose programmability lets them connect easily to almost any standard or non-standard interface. Still, PCIe is sufficient for servers, where GPUs can be used as-is. ASICs, for their part, also trail GPUs in AI, mainly because of their high cost, long development cycles, and significant development risks. With AI algorithms evolving rapidly, the long development cycle of ASICs becomes a major limitation.

For these reasons, GPUs have achieved their current success. For AI training, the massive computational power of GPUs significantly improves efficiency. AI inference, however, typically handles individual inputs (such as a single image), so the computational requirements are lower and parallelism is less critical. Here the GPU's advantage in raw computing power is less pronounced, and many enterprises may choose cheaper, more power-efficient FPGAs or ASICs instead.

The same applies to other computing scenarios: applications that demand absolute computational performance favor GPUs, while those with less demanding requirements may choose FPGAs or ASICs to save on cost where possible.

Read our other blogs on FPGA:

- https://www.eimtechnology.com/blogs/articles/what-are-fpgas-field-programmable-gate-arrays-used-for

- https://www.eimtechnology.com/blogs/articles/learning-kit-for-digital-circuits-and-fpgas

https://www.eimtechnology.com/collections/all-products/products/step-fpga-development-board

Check out our Digital Circuit + FPGA Learning Kit: https://www.eimtechnology.com/collections/all-products/products/fpga-digital-electronics-digital-circuits-learning-kit